Amazon announced that a Purdue student research team is one of ten international teams selected to participate in this year’s Alexa Prize TaskBot Challenge. The challenge focuses on developing multimodal (voice and vision) conversational agents that assist customers in completing tasks requiring multiple steps and decisions.

The company’s Alexa Prize is an industry-academic collaboration dedicated to accelerating the science of conversational artificial intelligence (AI) and multimodal human-AI interactions. The TaskBot Challenge, which began in January, builds on the Alexa Prize’s foundation of giving universities unique opportunities to test cutting-edge machine learning models with actual customers at scale.

For the challenge, teams must build conversational agents, or “taskbots,” that help customers with multistep tasks, such as removing a stain or cooking a turkey, while adapting to the items and tools the customer has on hand. For example, if a stain removal tip calls for white vinegar and the customer doesn’t have any, the taskbot should offer an alternative approach based on the items the customer does have.

“The Alexa Prize TaskBot Challenge combines a vast range of tasks over multiple domains with multimodal outputs,” said Rey (Alex) Gonzalez, graduate research assistant in Purdue’s Department of Computer and Information Technology. “This is the ultimate test for any moonshot concept, and we can’t wait to see what the real world has in store for us.”

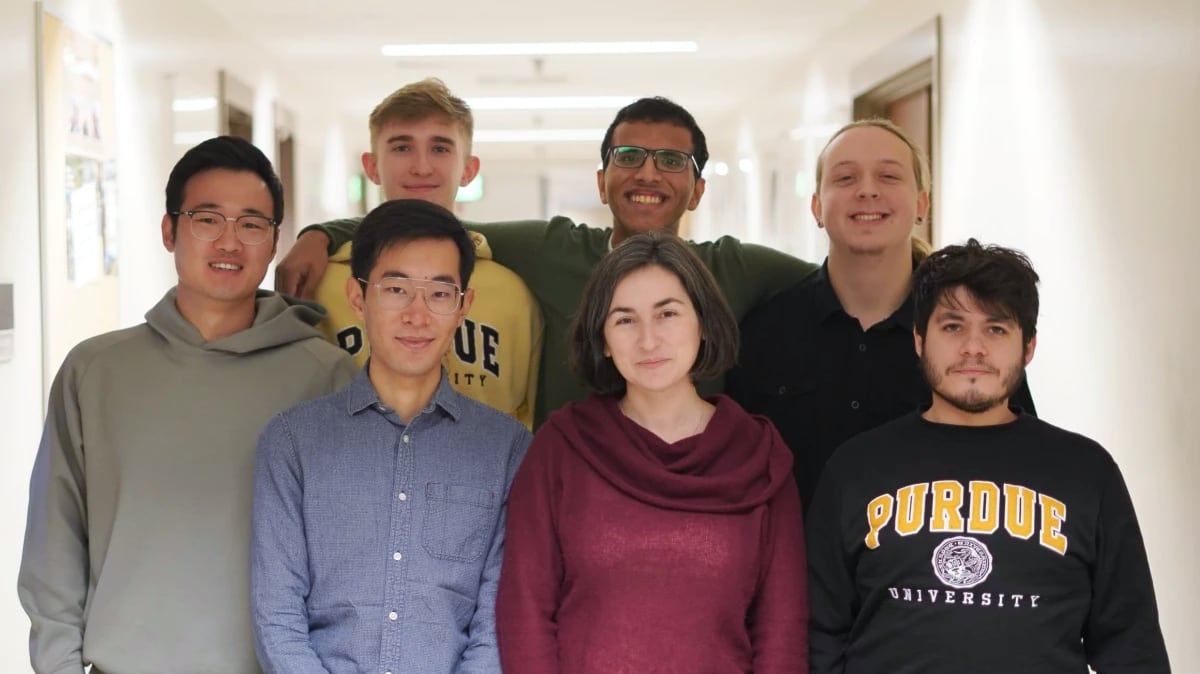

Gonzalez is BoilerBot’s team leader. Team members are Yifei Hu and Damin Zhang, graduate research assistants in computer and information technology, and undergraduate students Jack Lambert (digital criminology), Jinen Setpal (data science) and Jacob Zietek (computer science).

Julia Rayz, professor of computer and information technology in Purdue’s Polytechnic Institute and director of the Applied Knowledge Representation and Natural Language Understanding Lab, is faculty advisor for Team BoilerBot.

“We hope to develop a task-oriented system that can interact with users based on their level of knowledge, experience and communication preference,” said Rayz.

The TaskBot Challenge also asks participating teams to incorporate visual aids into every conversation when a screen is available. In addition to verbal instructions, customers using screen-enabled Echo or Fire TV devices may be shown step-by-step instructions, images or diagrams that enhance task guidance.

The Purdue BoilerBot team aims for its taskbot to act as a personalized mentor, presenting information differently depending on each user’s skill level. The team also plans to elicit users’ skill levels through both implicit and explicit interactions to further personalize the experience.

“Automated goal-directed interactions today often rely on the user to adapt to their resource, a cardinal sin of user interface and user experience design,” said Setpal and Zietek. “If something can go wrong, it will go wrong. The Alexa Prize TaskBot Challenge provides us the perfect playground to develop user-aware systems.”

Amazon will award $500,000 for the first-place team, $100,000 for second, and $50,000 for third. Those prizes will be paid out to the students on the teams with the best overall performance.

For having a team selected to participate, Purdue will receive a $250,000 research grant, Alexa-enabled devices, and free Amazon Web Services (AWS) cloud computing services to support the team’s research and development efforts. The team will also have access to Amazon scientists, the CoBot (conversational bot) toolkit, other tools such as automated speech recognition through Alexa, neural detection and generation models, and conversational data sets, as well as design guidance and development support from the Alexa Prize team. The challenge is in its second year; BoilerBot is Purdue’s first participating team.

See the full story from Amazon Science.

Additional information

- Amazon launches Alexa Prize TaskBot Challenge 2 (Amazon Science)

- Ten university teams selected for Alexa Prize TaskBot Challenge 2 (Amazon Science)

- Purdue Team BoilerBot (Amazon Science)

- Purdue Applied Knowledge Representation and Natural Language Understanding Lab